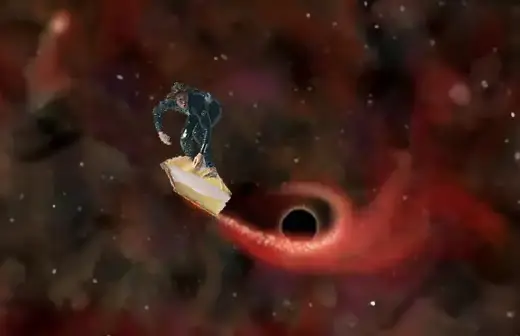

Surfing the Singularity

Surfing the web for The Singularity gives me a gnarly headache. No doubt a reaction to asking my brain to find ways of replacing itself.

The technological singularity is the hypothesized creation of smarter-than-human entities that accelerate technological progress so rapidly that human beings can no longer take part.

Vernor Vinge popularized the term "singularity" in this context, observing that, just as our model of physics breaks down when it tries to model the singularity at the center of a black hole, our model of the world breaks down when it tries to model a future that contains entities smarter than humans.

The Singularity is both intriguing and frightening, so I treat it like a dessert tray: the farther away it is, the less infatuated I am with it.

Futurists have predicted that, through the exponential growth of computer processing power, biotechnology or some other means, The Singularity could arrive as early as 2050.

Here are a few reasons why The Singularity might arrive later than expected.

Software is Hard

I agree that by mid-century, hardware or bioware capable of housing a superintelligent entity could exist. What we will not have by that time is the software to utilize it.

Put simply, until my computer is smarter than a trash can (a real-life receptacle never asks, "Do you really want to throw that away?"), I'm not worried about programmers developing a superintelligence.

Brain Swarming

It has been suggested that before AI experts understand the brain well enough to make up their minds, communities of "dumb" computers could be linked together to program themselves into a superintelligence.

To this scenario, I submit my last family reunion as one example where adding more brains in the room did not increase the overall intelligence of the group.

Good Warning, Dave?

Futurists and science fiction writers are often optimistic when putting a date on future technology. Example: Stanley Kubrick and Arthur C. Clarke's 2001: A Space Odyssey (1968), which imagined a thinking, talking computer, HAL 9000, by the year 2001.

Better Me Than You

What I cannot push past my comfort date is the enhanced human brain. It is conceivable that genetics, drugs and/or brain-machine interfaces could help create a superintelligent brain by the year 2050. Would you mind terribly if I went first?

By the Way

The path to the pinnacle of humanity is a slippery slope. Is society ready for the precursive power presented by the building blocks of the Singularity: computers, nanotechnology, biotechnology, artificial intelligence and information?

Everywhere I look, I see vanity, greed, hunger and waste (I'm writing this from a coffee house at the airport). Undesirable human traits could fuel global catastrophes in the technologically advanced civilization we are becoming.

Long before the arrival of the Singularity, we will need to change our ways. A benign, compassionate and sharing civilization has the best chance to survive the flood of information and technology that is headed our way.

Singularity Articles

I search the internet daily for new articles from around the world that interest me or that I think will interest you. My hope is that this list saves you time or helps students with their assignments. Articles are listed most recent first, dating back to 2005.

- Vernor Vinge, father of the tech singularity, has died at age 79 from Ars Technica
- The Singularity — When We Merge With AI — Won’t Happen from Mind Matters
- Exploring non-anthropocentric aspects of AI existential safety from LessWrong
- What Is the AI Singularity, and Is It Real? from How-To-Geek
- William Dembski: Destroy the AI Idol Before It Destroys Us from Mind Matters
- Many Artificial Intelligence Researchers Think There's A Chance AI Could Destroy Humanity from IFLScience
- Beyond the AI Singularity: Are we ready for what comes next? from The Business Standard
- Could Artificial Intelligence Ever Become Truly Self-Aware? from Grunge
- OpenAI Demos a Control Method for Superintelligent AI from IEEE Spectrum
- Could AI become conscious? Physicists and neuroscientists search for answers from GeekWire
- Are We Approaching the Singularity? from Mind Matters
- Why ChatGPT isn’t conscious – but future AI systems might be from The Conversation
- Sentient AI will never exist via machine learning alone from Cybernews
- How Studying Animal Sentience Could Help Solve the Ethical Puzzle of Sentient AI from Singularity Hub
- Some AI experts have begun to confront the 'Singularity.' What they see scares them. from Popular Science
- The singularity is here, again from Axios
- How to develop artificial super-intelligence without destroying humanity from The Guardian
- If we’re going to label AI an ‘extinction risk’, we need to clarify how it could happen from The Conversation
- I’m a rational optimist. Here’s why I don’t believe in an AI doomsday. from Big Think
- Artificial intelligence could lead to extinction, experts warn from BBC News
- The myth of machine consciousness makes Narcissus of us all from Psyche
- How to stop runaway AI from Freethink
- When will AGI arrive? Here’s what our tech lords predict from TNW
- Technological Singularity: Preparing for an Unpredictable Future from IndraStra
- Can artificial intelligence ever be sentient? from BBC Reel
- Experts say the AI singularity could be here in the next two decades from PC Gamer
- Will The Singularity rescue us from death? from Freethink
- Generative AI may only be a foreshock to AI singularity from VentureBeat
- The CEO of the company behind AI chatbot ChatGPT says the worst-case scenario for artificial intelligence is 'lights out for all of us' from Business Insider
- Humanity May Reach Singularity Within Just 7 Years, Trend Shows from Popular Mechanics
- Conscious Robots Will Be 'Bigger Than Curing Cancer,' Scientists Say from Popular Science
- Rodney Brooks on the Singularity: Neither Heaven nor Hell video
- A New Brain Model Could Pave the Way for Conscious AI from SciTechDaily
- The new Turing test: Are you human? from ZDNet
- Oxford’s John Lennox Busts the Computer Takeover Myth from Mind Matters
- The Real Threat From A.I. Isn’t Superintelligence. It’s Gullibility. from Slate
- Why AI will never rule the world from Digital Trends
- Researchers Say It'll Be Impossible to Control a Super-Intelligent AI from ScienceAlert
- Would artificial superintelligence lead to the end of life on Earth? It's not a stupid question from Salon
- Superintelligence: How A.I. will overcome humans from Big Think
- Why we talk about computers having brains (and why the metaphor is all wrong) from The Conversation
- AI vs. humans, part II from SmartBrief
- What if an Artificial Intelligence program actually becomes sentient? from NPR
- If Artificial Intelligence Were to Become Sentient, How Would We Know? from Singularity Hub